As organizations turn to artificial intelligence and machine learning to help eliminate human bias in the recruitment and employee management processes, the opposite can occur. In fact, AI-managed systems can create bigger bias problems. In last week’s issue of CWS 3.0, I discussed the potential risks of using such tools without proper oversight. Here, I cover the actions being undertaken to provide oversight in this arena, by both companies themselves and the US government.

Data & Trust Alliance. More than a dozen of the world’s large employers in December announced they are adopting criteria to mitigate data and algorithmic bias in human resources and workforce decisions — including recruiting, compensation and employee development.

The companies are members of the Data & Trust Alliance, which resides within the Center for Global Enterprise, a New York-based nonprofit. Established in 2020, the Data & Trust Alliance brings together businesses and institutions across multiple industries to learn, develop and adopt responsible data and AI practices. The consortium is co-chaired by Ken Chenault, General Catalyst chairman and managing director, and former American Express chairman and CEO; and Sam Palmisano, former IBM chairman and CEO. Jon Iwata, founding executive director, works with a cross-functional team of senior executives selected by their CEOs to identify and drive Alliance projects.

Palmisano discusses the Data & Trust Alliance in a Bloomberg Markets “Balance of Power” video.

The alliance’s first initiative, “Algorithmic Safety: Mitigating Bias in Workforce Decisions,” is designed to help companies evaluate vendors based on their ability to detect, mitigate and monitor algorithmic bias. The safeguards include 55 questions in 13 categories that can be adapted by companies to evaluate vendors on criteria including training data and model design; bias testing methods; bias remediation; transparency and accountability; and AI ethics and diversity commitments.

“As businesses transition from ‘going digital’ to becoming ‘data enterprises,’ it is imperative to unlock the value of data and AI in ways that earn trust with every stakeholder,” says Doug McMillon, president and CEO of Walmart, and the current chairman of Business Roundtable. “Developed and used responsibly, these systems hold the promise of making our workforces more diverse, more inclusive and ultimately more innovative.”

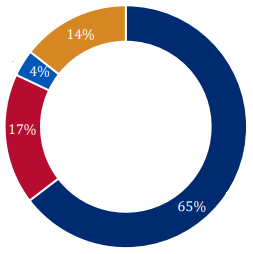

Companies adopting the safeguards include American Express, CVS Health, Deloitte, Diveplane, General Motors, Humana, IBM, Mastercard, Meta, Nielsen, Nike, Under Armour and Walmart. Collectively, they employ more than 3.7 million people. Additional Alliance companies are evaluating the safeguards and are expected to adopt them.

“As we accelerate our digital transformation, we also have to ensure that the way we are using data and AI is consistent with our values,” says Laurie Havanec, chief people officer at CVS Health. “Our work to mitigate bias in human resources and recruiting will help us build more diverse and inclusive teams that we know will produce better results for our companies and our communities.”

EEOC initiative. The US Equal Employment Opportunity Commission in October launched an initiative to ensure that AI and other emerging tools used in hiring and other employment decisions comply with federal civil rights laws that the agency enforces.

“Artificial intelligence and algorithmic decision-making tools have great potential to improve our lives, including in the area of employment,” said EEOC Chair Charlotte Burrows. “At the same time, the EEOC is keenly aware that these tools may mask and perpetuate bias or create new discriminatory barriers to jobs. We must work to ensure that these new technologies do not become a high-tech pathway to discrimination.”

As a component of its initiative, the EEOC intends to:

- Establish an internal working group to coordinate the agency’s work on the initiative;

- Launch a series of listening sessions with key stakeholders about algorithmic tools and their employment ramifications;

- Gather information about the adoption, design, and impact of hiring and other employment-related technologies;

- Identify promising practices; and

- Issue technical assistance to provide guidance on algorithmic fairness and the use of AI in employment decisions.

New York City AI law. New York City in December passed a law that requires employers and employment agencies that use “automated employment decision tools” within the city to conduct independent audits of such tools for bias and to provide disclosures to candidates and employees at least 10 business days before using them. Violators may be subject to civil fines of up to $1,500 for each violation.

The Big Apple’s Automated Employment Decision Tools (AEDT) law, which takes effect Jan. 1, 2023, sets new standards for employers using AI tools in making employment decisions, The National Law Review reported.

“A silver lining? The AEDT may provide — much needed — guidance for AI developers, auditors, and employers by setting standards for AI used to make employment decisions,” according to the article. “Also, it is anticipated that the AEDT will provide compliant employers ammunition to defend against legal challenges to their use of AI and related discrimination claims.”
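
For readers wondering what a bias audit might actually measure, here is a minimal sketch in Python. It is an illustration only, not the method prescribed by the New York City law or by the Data & Trust Alliance safeguards, neither of which is spelled out in this article. It assumes one widely cited heuristic, the "four-fifths rule" from the federal Uniform Guidelines on Employee Selection Procedures, under which a group whose selection rate is less than 80% of the highest group's rate is flagged for closer review. The group labels, the sample numbers and the function names are all hypothetical.

```python
from collections import Counter


def selection_rates(records):
    """Return the share of candidates selected in each group.

    `records` is an iterable of (group, was_selected) pairs.
    """
    totals = Counter()
    selected = Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("No candidates were selected in any group.")
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """List groups whose impact ratio falls below the threshold.

    The 0.8 default reflects the four-fifths heuristic (an assumption
    of this sketch, not a requirement stated in the article).
    """
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical screening results: 100 candidates in each of two groups.
records = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 25 + [("B", False)] * 75
)

rates = selection_rates(records)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)

for group in sorted(rates):
    print(f"group {group}: rate {rates[group]:.2f}, impact ratio {ratios[group]:.3f}")
print("flagged for review:", flagged)
```

In this made-up example, group B is selected at 25% against group A's 40%, an impact ratio of 0.625, so group B would be flagged. A flag of this kind is a prompt for further review rather than proof of discrimination, and a real audit would likely involve more than a single ratio.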

Tremendous Potential

Despite its challenges, AI has tremendous potential to help workforce managers and talent acquisition specialists extend their capabilities and reach by automating routine or repetitive tasks and enabling faster processing of data.

“Whether it is to reduce time to hire or improving quality of hire, AI and human collaboration can lead to recruiting efficiencies and effectiveness,” says Susannah Shattuck, head of product at Credo AI. For example, chatbots can help recruiters manage initial conversations with far more candidates than they can handle on their own, and AI-driven search and filtering tools can help keep the focus on the highest-potential candidates from a pool of thousands when a specific role needs to be filled quickly. And in our current remote-work world, AI-driven interview tools can help assess candidate responses and behaviors by analyzing both verbal cues, such as tone of voice, and nonverbal communication, such as facial expressions, during interviews.

“While there is great potential, there is also tremendous risk if these technologies aren’t developed and deployed responsibly that often stems from organizations lacking understanding of their limitations,” Shattuck says. “These risks can vary on a spectrum from potential bias and discrimination to privacy concerns.”

Perhaps most important, keeping bias top of mind can prevent these tools from systematically prioritizing one group over another, which could lead to unfair outcomes and undermine your most important goal: securing the best talent to fill your most important roles.